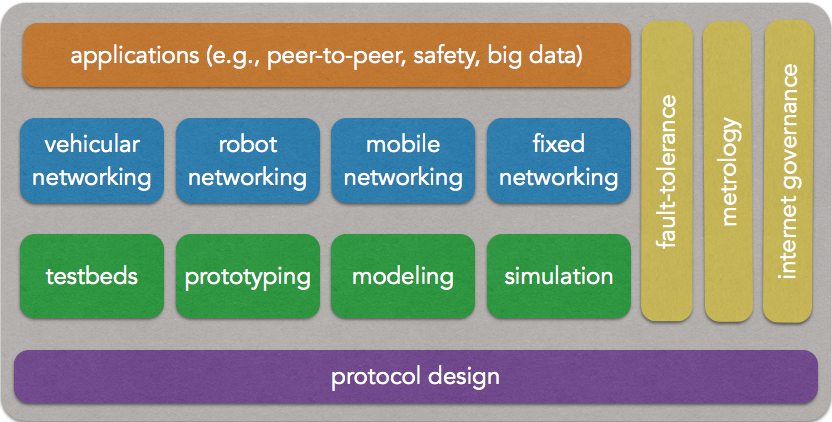

The NPA team aims to develop a vision for the future Internet and to design solutions to shape and manage it. The team targets the control of ubiquitous, mobile and versatile networks that are spreading throughout our private and professional environments. The core of our work concerns problems related to multimedia and mobile networks, resource management, scalability, ambient networks and content networking. Significant work is also carried out in Internet measurement, modeling and traffic engineering.

Fault-tolerant networks

Most classical distributed fault-tolerant techniques do not scale well. Using them would result in mechanisms that either consume too many resources (memory, processing time, etc.) or are overkill for the problem at hand. Our research agenda is to revisit forward recovery mechanisms and to devise refinements suited to scalability and dynamicity, such as Byzantine containment, selfish stabilization, systemic stabilization or predicate-preserving stabilization.
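Self-stabilization is the textbook example of forward recovery. From any arbitrary (for instance, corrupted) state, the system converges to a legitimate state without rolling back. The sketch below simulates Dijkstra's classic K-state token ring. It is not one of the refinements listed above. It only illustrates the baseline convergence property they build on. The ring size, the value of `K`, the random central daemon and all function names are assumptions chosen for the illustration.

```python
import random


def privileged(x):
    """Indices of machines holding a privilege (the 'token') in ring state x."""
    n = len(x)
    priv = []
    # Bottom machine 0 is privileged when it equals its left neighbour, machine n-1.
    if x[0] == x[n - 1]:
        priv.append(0)
    # Any other machine is privileged when it differs from its left neighbour.
    for i in range(1, n):
        if x[i] != x[i - 1]:
            priv.append(i)
    return priv


def move(x, i, K):
    """Fire machine i (assumed privileged): bottom increments, others copy left."""
    if i == 0:
        x[0] = (x[0] + 1) % K
    else:
        x[i] = x[i - 1]


def run(n, K, steps, seed=0):
    """Simulate the ring from a random initial state under a random central daemon.

    Returns the final state and, for each step, how many machines were privileged.
    """
    rng = random.Random(seed)
    x = [rng.randrange(K) for _ in range(n)]  # arbitrary, possibly corrupted, start
    history = []
    for _ in range(steps):
        priv = privileged(x)  # never empty: some machine is always privileged
        history.append(len(priv))
        move(x, rng.choice(priv), K)  # the daemon fires one privileged machine
    return x, history
```

With `K >= n`, the ring eventually reaches a state with exactly one privileged machine, whatever the initial values. It then stays legitimate: the single token simply circulates. For example, `run(5, 6, 2000)` ends with `privileged(x)` of length 1. The random daemon here is a simplification. Dijkstra's argument covers any central daemon.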

Mobile networked systems

Changes in user behavior, driven by the evolution of communicating computing systems, together with the development of smaller and better-performing devices, are gradually modifying the way networks operate. Users are becoming increasingly nomadic and want more freedom while preserving maximum simplicity. In this new world of applications and services, it now seems possible to establish self-organizing networks (SONs) spontaneously. Ad hoc networks, sensor networks and mesh networks are examples of self-organizing networks. The main characteristics that differentiate SONs from traditional networks are the absence of a management infrastructure (for instance, no centralized addressing system is available) and the dynamics of the nodes. Other characteristics are the absence of a geographical positioning system, the limited and variable capacity of wireless links, and the spontaneous nature of the resulting topology. We believe it is now important to investigate the relationships between the different components of the network architecture in order to address the new challenges raised by SONs and, in particular, to ensure scalability.

Governance

The PolyTIC research activity explores the political, legal, social and technical stakes of information and communication sciences and techniques. PolyTIC deals with the relationships between ICTs, public policies and the public space. Its current research axes include the technical governance and political government of the Internet, the role of ICTs in the transformation of the rule of law, privacy and personal data protection, and communication usage on a mobile campus. In summary, the PolyTIC activity focuses on the governance of electronic networks and their usage, following a multidisciplinary approach.

Content networks

Major evolutions in networks and in their usage are driven by applications. In this context, we aim to study and develop new architectures that support the transmission of new kinds of data traffic, in particular continuous data flows. Target applications include live video (P2PTV or IPTV), massively multiplayer online games and sensor networks. These activities take place within the French "pôle de compétitivité IMVN" (a cluster related to Image, Multimedia and Digital Life, located in the Paris area).

Metrology

Our metrology research employs both passive and active network measurements, in classic wired networks as well as in new wireless environments. The passive measurement work aims to understand traffic flows in large backbones and corporate networks. We develop techniques to identify and classify these flows in order to support network management and to recognize and respond to security threats. For instance, we have created algorithms that rapidly and reliably determine which flows are the 'elephants' (the few and large) and which are the 'mice' (the many and small). We have also tackled the difficult problem of classifying the flows that fall in between. As another example, we have found efficient ways of estimating a network's traffic matrix through a combination of modeling and judicious flow sampling. In the security area, we are looking at ways of discerning attack traffic among all the packets that enter a network.

In active measurements, we consider problems related to the topology of the Internet graph and to the geolocation of Internet hosts. In topology measurement, our focus has been on improving the efficiency of measurements based on route traces. We are now bringing intra-domain and inter-domain routing knowledge to bear on the problem. For geolocation, we have developed improved methods for locating a host using pings towards the host and towards a set of reference landmarks.

Our wireless work has been informed by measurements of bit-loss and packet-loss rates, which serve as a basis for developing more effective channel coding techniques. We also use traffic measurements on wireless networks as a basis for modeling new traffic generators for network simulation.
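The elephant/mouse distinction can be illustrated with one common heuristic. The sketch below is not the team's own algorithm. It ranks flows by size and treats as elephants the fewest largest flows that together carry a chosen fraction of all bytes. The function name and the 80% default for `load_fraction` are assumptions. The sketch deliberately ignores the harder "in between" flows and any change in flow size over time.

```python
def classify_flows(flow_bytes, load_fraction=0.8):
    """Split flows into elephants and mice by cumulative byte share.

    flow_bytes: dict mapping a flow identifier (e.g. a 5-tuple) to its byte count.
    Elephants are the fewest largest flows that together carry at least
    load_fraction of all bytes; every remaining flow is labelled a mouse.
    """
    total = sum(flow_bytes.values())
    # Largest flows first, so the elephant set is as small as possible.
    ranked = sorted(flow_bytes.items(), key=lambda kv: kv[1], reverse=True)
    elephants, carried = set(), 0
    for flow, size in ranked:
        if carried >= load_fraction * total:
            break  # the target share of bytes is already covered
        elephants.add(flow)
        carried += size
    mice = set(flow_bytes) - elephants
    return elephants, mice
```

For example, `classify_flows({"a": 9000, "b": 500, "c": 300, "d": 200})` returns `({"a"}, {"b", "c", "d"})`. A single flow carries 90% of the bytes, so it alone is an elephant. Raising `load_fraction` to 0.95 also pulls `"b"` into the elephant set.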

Modeling

Economic pressures are such nowadays that system performance evaluation has become an unavoidable and highly strategic field. It is inconceivable to build any system (whether a computing system, a communication network or a manufacturing system) without first conducting performance analyses. An undersized system will be unusable, and an oversized system will be financially wasteful. Research at the Networks and Performance Analysis group focuses on the Internet of the future and aims to develop solutions to build and master it. To this end, this activity develops analytical models of both wired and wireless communication networks in order to evaluate their performance and help dimension them. Several research axes are currently under investigation, concerning wired networks, ad hoc networks and cellular networks (GSM/GPRS/EDGE/UMTS). The common objective of all these axes is to develop simple models that provide both a meaningful qualitative analysis of the system's fundamental behavior and a fast quantitative analysis of its performance. Most of the techniques used come from the theories of stochastic processes, Markov chains and queueing.
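The classical Erlang B formula is a standard example of the kind of dimensioning question that queueing models answer. It gives the blocking probability of a loss system, for instance voice channels in a GSM cell, assuming Poisson call arrivals and blocked calls lost. The minimal sketch below computes it with its usual recursion and searches for the smallest number of channels that meets a blocking target. The function names and the 1% target in the example are illustrative assumptions, not results from the group's work.

```python
def erlang_b(traffic, channels):
    """Blocking probability of an M/M/c/c loss system (Erlang B).

    traffic:  offered load A in Erlangs (arrival rate x mean holding time).
    channels: number of servers c.
    Uses the numerically stable recursion
        B(0) = 1,  B(k) = A*B(k-1) / (k + A*B(k-1)).
    """
    if traffic < 0 or channels < 0:
        raise ValueError("traffic and channels must be non-negative")
    b = 1.0
    for k in range(1, channels + 1):
        b = traffic * b / (k + traffic * b)
    return b


def channels_needed(traffic, target_blocking):
    """Smallest number of channels whose Erlang B blocking is <= target_blocking."""
    if not 0 < target_blocking < 1:
        raise ValueError("target_blocking must lie in (0, 1)")
    c = 0
    # Blocking decreases towards 0 as channels are added, so this loop terminates.
    while erlang_b(traffic, c) > target_blocking:
        c += 1
    return c
```

For an offered load of 1 Erlang, `erlang_b(1.0, 2)` is 0.2 and `channels_needed(1.0, 0.01)` returns 5, since four channels still block about 1.5% of calls. The recursion avoids the large factorials of the closed-form expression, which keeps it usable for the loads seen in real cells.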